It is challenging to guess what is working and what is not. And if you guess wrong, it could be a waste of your time and resources.

So here's where A/B testing comes into play.

A/B testing different subject lines can help you identify which one resonates most with your audience.

For instance, you might find that a personalized subject line prompts more users to open their emails. Then you can use this tactic to increase your open and click-through rates.

Designing an email, writing compelling email copy, and sending that email to subscribers all require money and time.

Not knowing which element isn't working can lead to needless spending. A/B testing 2 or 3 elements can help determine which one provides a more engaging user experience. Then, you can implement those changes and reduce unnecessary costs.

A/B testing produces results backed by data. With careful analysis, you can make changes to your current campaigns and invest money where you are getting the best return. Hence, you can minimize the chances of loss and optimize your investment.

💡 Related read: How to Optimize Your Email Program Using Strategic A/B Testing

How to decide what elements to A/B test?

Follow these steps to decide what to test in your email marketing campaigns:

1. Collect data and identify the problem

The first step to improving anything is to identify the problem. A/B testing works in the same way.

Collect your email campaign's performance data using your ESP's analytics. Then, look at the performance of your metrics over a specific period to assess which metric is not performing up to the mark.

You might be getting fewer clicks on your promotional emails, or your conversion rate might have decreased over the past week. Once you identify the issue, move on to the next step.
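To spot the underperforming metric, it can help to compute the common rates from the raw counts your ESP exports. The sketch below is only an illustration: the field names and numbers are made up, and it assumes conversion rate is measured against delivered emails (some teams measure it against clicks instead).

```python
from dataclasses import dataclass


@dataclass
class CampaignStats:
    """Raw counts for one campaign (hypothetical field names, not a real ESP export)."""
    delivered: int
    opens: int        # unique opens
    clicks: int       # unique clicks
    conversions: int


def rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def summarize(stats: CampaignStats) -> dict:
    """Compute the rates most often used to diagnose an email campaign."""
    return {
        "open_rate": rate(stats.opens, stats.delivered),
        "click_rate": rate(stats.clicks, stats.delivered),
        # Click-to-open rate: of the people who opened, how many clicked
        "ctor": rate(stats.clicks, stats.opens),
        # Assumption: conversions are measured against delivered emails
        "conversion_rate": rate(stats.conversions, stats.delivered),
    }


# Made-up numbers for illustration only
last_week = CampaignStats(delivered=2000, opens=300, clicks=90, conversions=18)
for name, value in summarize(last_week).items():
    print(f"{name}: {value:.1%}")
```

Comparing these figures across periods shows which metric has slipped and is therefore worth testing first.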

2. Define your goals

Identify what you want to achieve via A/B testing. Is it to get 2X or more conversions in a month from your email newsletter?

Setting a realistic, achievable goal is crucial, as you develop your hypothesis based on it.

A hypothesis is an assumption, grounded in your data, about why one variation might perform better than another. Keep your goals in mind while formulating your hypothesis.

A hypothesis gives your testing a direction. If you develop multiple hypotheses, prioritize them according to their relevance to your goals.

This table summarizes the above three points with examples:

| Data collection and problem identification | Goals | What to test (Hypothesis) |
| --- | --- | --- |
| Email open rate is low | Improve open rates | Email subject line and pre-header text |
| CTOR is low | Boost click-through rates | Content of your email and the layout and design of your emails |
| CTOR and conversion are low | Boost conversions and ROI | Call-to-action |

After developing your hypothesis, you can start testing your email in the following manner:

1. Create variations of your email

Make variations in different elements of your email, such as subject line, CTA button, images, preheader text, etc.

For example, you can create two personalized subject lines or use a button as a CTA rather than a link. Doing so will help you determine which version is more engaging to your audience.

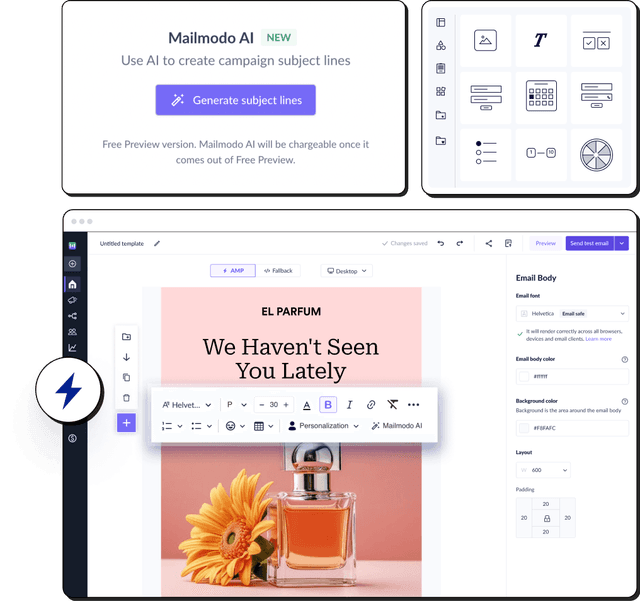

See how you can do subject line A/B testing in Mailmodo:

Step 1: Create a new campaign by selecting a new or pre-made template.

Step 2: Go to the 'Add details' page. This is where you can add a subject line variation to test.

- Add the campaign name and subject line. Next to the subject line, press the '+' icon to add another subject line.

- Then add the preheader text, sender name, and other details.

Learn more about email personalization: An Ultimate Guide to Creating Personalized Emails

2. Run email A/B testing

Now that your email variations are ready, it's time to run the test. Kick off your trial run by sending one version to a subset of the audience and the other to a different subset. Monitor the interaction of your audience, and collect data using different metrics.

In Mailmodo, after you add the variant and the original subject line, click the 'Next' button and select the contacts you want to send the emails to. Once done, preview the details and send the campaign or schedule it for the specified time.
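If you are preparing the audience split yourself rather than relying on your ESP, a random assignment keeps the two groups comparable. The following is a minimal sketch, not how Mailmodo or any other ESP implements it: the function name `split_audience`, the 20% test fraction, and the example addresses are all assumptions made for illustration.

```python
import random


def split_audience(contacts, test_fraction=0.2, seed=None):
    """Randomly pick a test sample and split it evenly into groups A and B.

    Returns (group_a, group_b, remainder). The remainder is held back so the
    winning version can be sent to it later.
    """
    rng = random.Random(seed)      # a seed makes the split reproducible
    shuffled = list(contacts)      # copy so the caller's list is untouched
    rng.shuffle(shuffled)

    test_size = int(len(shuffled) * test_fraction)
    half = test_size // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


# Hypothetical contact list for illustration
contacts = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_audience(contacts, test_fraction=0.2, seed=42)
print(len(group_a), len(group_b), len(remainder))
```

Shuffling before slicing means neither group is biased toward, say, your oldest or newest subscribers.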

3. Analyze the test results

Then, compare the results of both versions and identify a champion version.

You can check the performance of both subject lines in Mailmodo's analytics dashboard. Metrics such as delivered, opens, clicks, and submissions are all displayed there to help with your analysis.

Once you get the champion version, use that to garner good returns by sending it to different segments of users.

If the results you get through the variant version are not good, try testing with a different element. This A/B test process can continue until you get the desired result.

Related guide: Why You Should Test Your Emails Before Sending Them.

How long should your A/B test run?

A question that might arise while A/B testing is how long you should run your tests.

Well, there is no definitive answer to this question.

Many factors affect how long your A/B test should run, including your business goals, audience size, the metrics you want to test, and your email marketing budget.

A survey conducted by Mailchimp reflects the estimated time it took to generate results depending on the metrics.

- For open rate, a winner was selected within 2 hours in 80% of the cases. For click rate, a champion version was chosen in just 1 hour.

- In the case of revenue generation, it took 12 hours to find a champion version successfully.

So, it is pretty clear that there is no pre-defined time frame for running your tests. However, letting your tests run for an adequate amount of time will give you more confidence when deciding on the champion version of your email.

Email A/B testing best practices

Your organization's success depends on how you conduct email A/B testing and draw insights from data to increase conversions. That is why you must follow the correct practices.

Here are the most important A/B testing best practices that many email marketers forget to follow:

• Test emails simultaneously

The timing of your email test matters. If you send the versions at different times, you may get skewed results. Thus, it is crucial to send both versions simultaneously to get reliable results.

• Use a representative sample size of your subscribers

Choose a suitable set of subscribers with similar interests, behaviors, locations, etc. to assess the performance of version A and version B. Sending your test emails to the wrong audience may not yield the desired results, as different people have different preferences and interests, and they might be at different stages in their lifecycle journey.

• Test one element at a time

A common misstep in A/B testing is that marketers try to test everything simultaneously, says Sudha Bahumanyam, Senior Principal B2B Consultant at OMC Consulting. Testing one variable at a time provides accurate and actionable insights.

Try to keep A/B testing limited to one element at a time to accurately assess your test's performance. If you test two variables at a time, it might be difficult to attribute the change to a particular variable.

• Ensure results are statistically significant

It might be tempting to view the result of your subject line A/B test and assign a winner based on which email gets you the higher open rate. But that decision might not be accurate.

To ensure your findings are reliable, you should check the statistical significance of the A/B testing results. Statistical significance tells you whether the difference between your control and test versions is unlikely to be due to error or random chance.

You can use one of the many free online significance checker tools, such as SurveyMonkey, the VWO A/B testing significance calculator, etc.
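To see roughly what such calculators do, here is a minimal sketch of a two-proportion z-test, one common way to compare two open rates. It is not necessarily the method any particular tool uses; the function name, the sample counts, and the 0.05 threshold are assumptions chosen for illustration.

```python
import math


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Return (z, two-sided p-value) for the difference between two open rates."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled rate under the assumption that both versions perform the same
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_error == 0:
        return 0.0, 1.0            # no variation at all, so nothing to detect
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up results: version A got 200 opens out of 1,000, version B got 250 out of 1,000
z, p_value = two_proportion_z_test(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
if p_value < 0.05:                 # 0.05 is a conventional, not mandatory, threshold
    print("The difference is statistically significant.")
else:
    print("The difference could be due to chance; keep testing.")
```

A small p-value suggests the gap between the two versions is unlikely to be random noise. With small sample sizes, even a large-looking gap can fail this check, which is one more reason to test on a big enough audience.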

Most ESPs, like Litmus, Mailchimp, and Campaign Monitor, have built-in A/B testing tools, so you do not need an additional tool to conduct email A/B testing.

Your ESP can help you throughout the process, as we do at Mailmodo. Our customer support and product team guide customers through the nitty-gritty of A/B testing, such as what elements they should test, how to measure the results, etc.

Start A/B testing your emails today

Now, it's time to put A/B testing into practice. First, look at your email analytics and determine what you want to test in your email. Then, choose tools that offer affordability, insightful data, and the flexibility to experiment with variables.

With Mailmodo, you can edit and send interactive variations of your emails. This, in turn, will help you generate higher ROI from your email campaigns.

So, what are you waiting for? Start A/B testing today with Mailmodo.